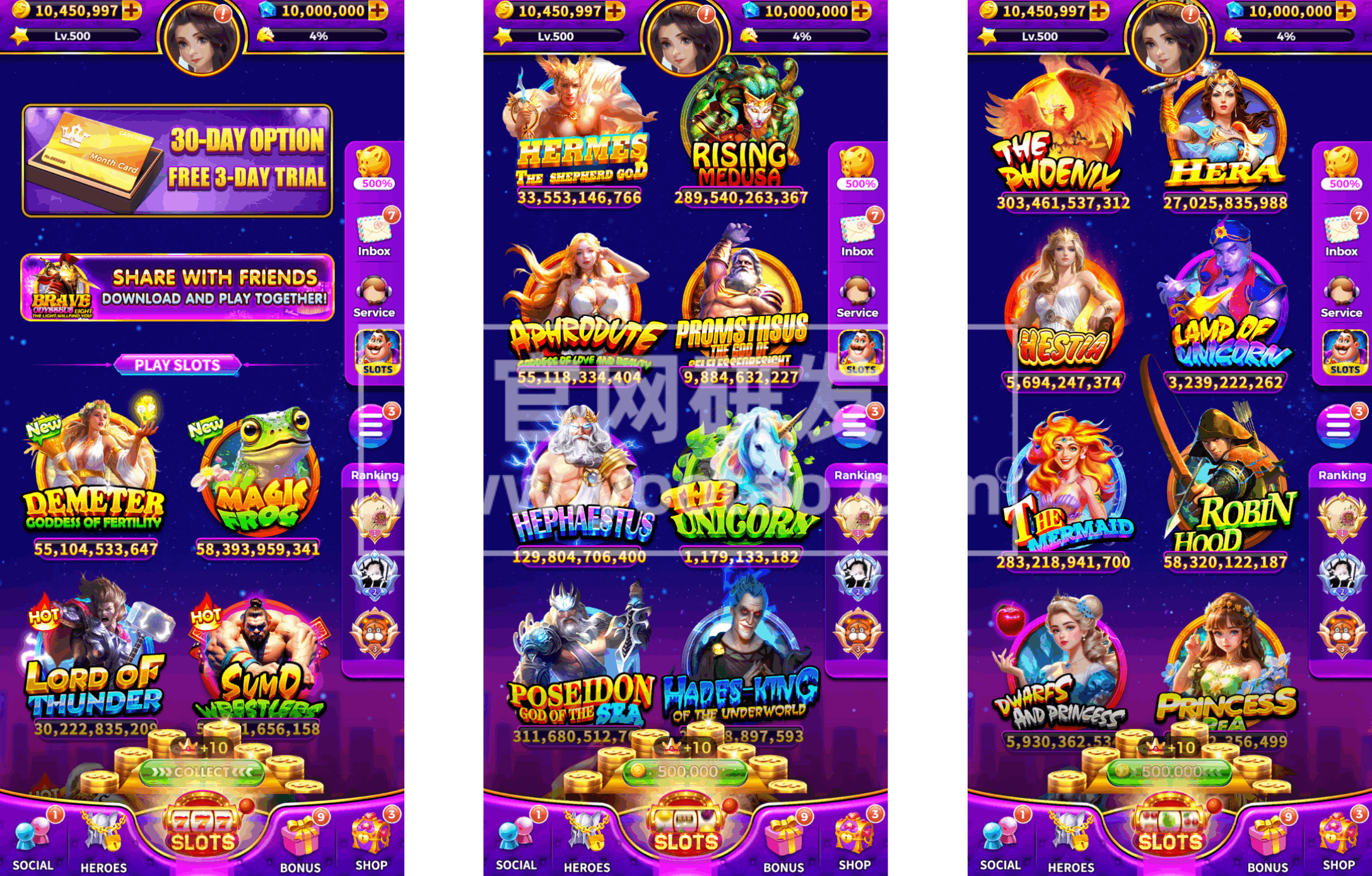

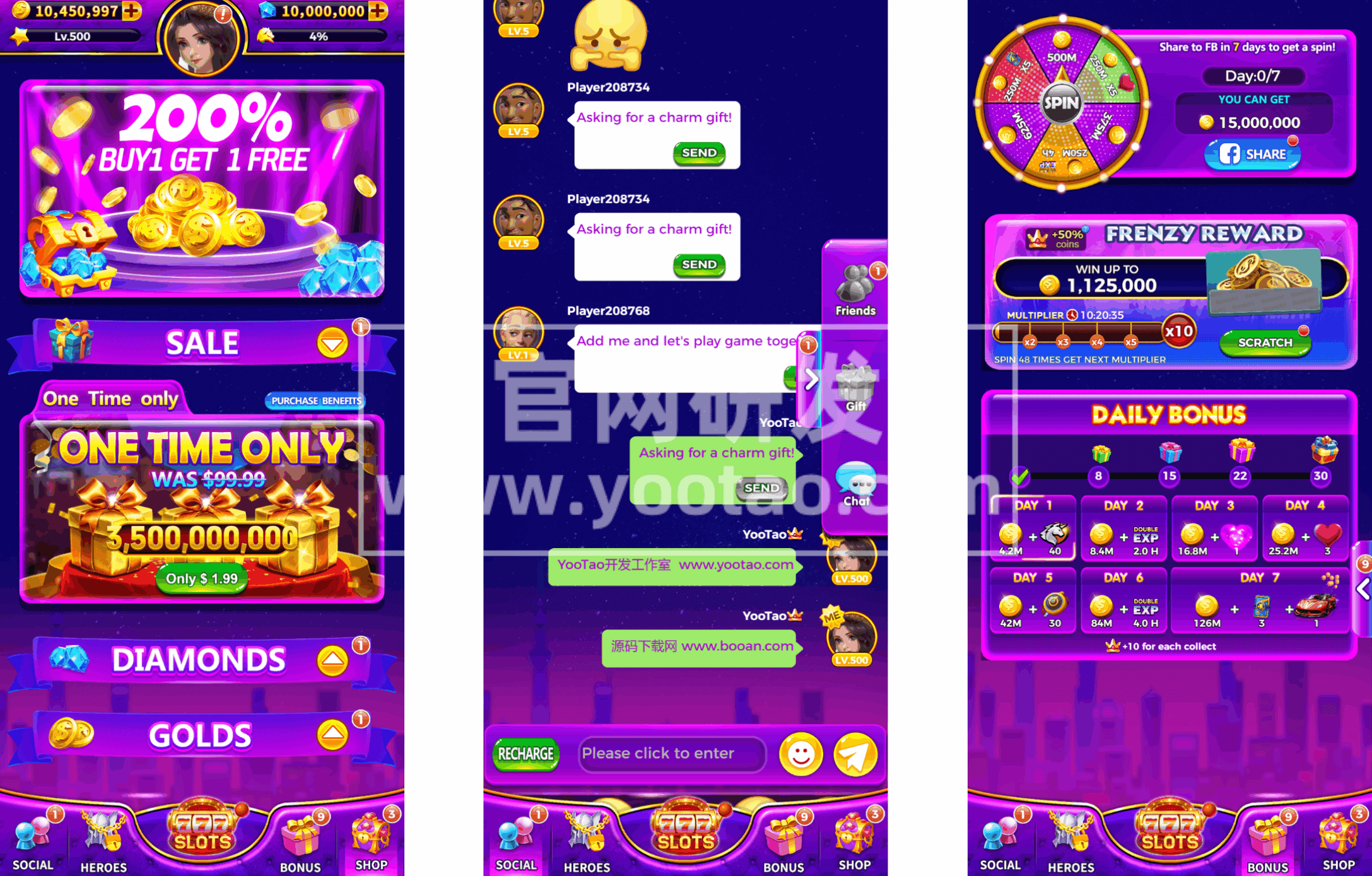

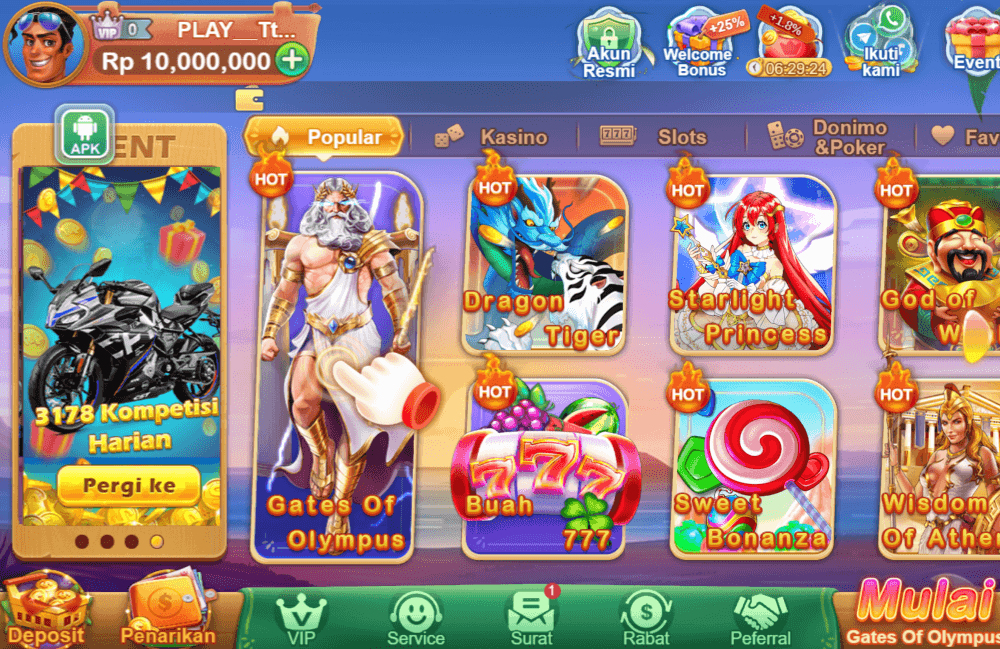

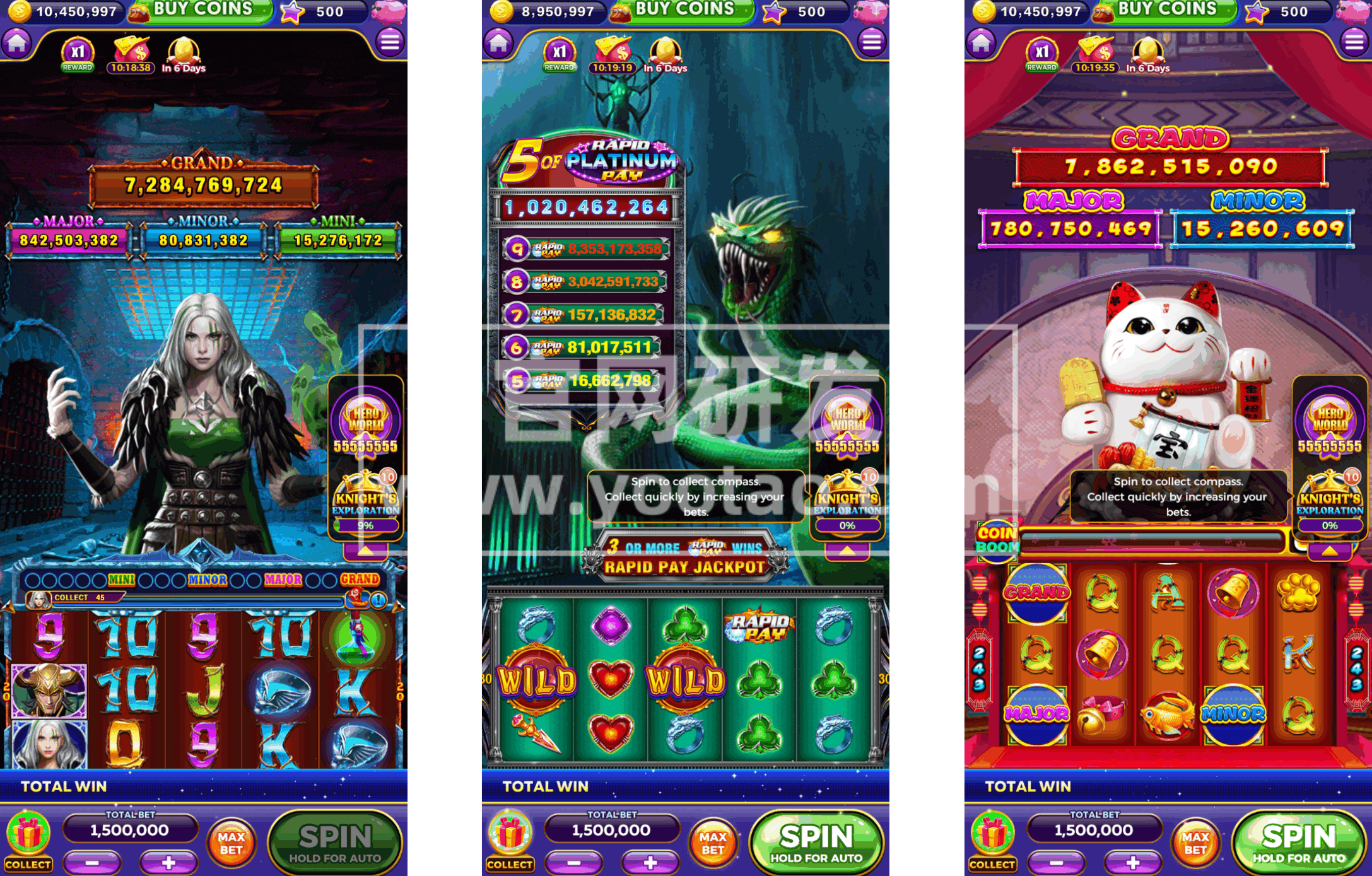


According to a March 17 report by Russian Satellite News Agency, government officials in the Russian-controlled Zaporozhye region told reporters that day that an assassination attempt against a confidant of Russian President Vladimir Putin had been foiled in the city of Berdyansk.

According to reports, Vladimir Rogov, a senior official of the local administration in Zaporozhye, revealed that the authorities had stopped an assassination plot involving a confidant of Russian President Vladimir Putin.

He said: "Ukrainian terrorists planned the attack but did not succeed. Their criminal plans were thwarted by the professional actions of the Russian secret services."

Rogov added that all those involved in planning the terrorist attack will be hunted down.

The 17th is the last day of voting in the Russian presidential election. (Compiled by Wei Yudong)

Related news reports:

Russia announces: all cross-border attacks repelled, 550 Ukrainian personnel killed

Reference News, March 17: According to a report by Russian Satellite News Agency on March 17, Russian Presidential Press Secretary Dmitry Peskov told reporters that the military routinely reports Ukraine's attacks on Russian territory to President Putin.

He pointed out: Putin received reports from the military that Ukrainian troops recently attempted to attack Russian territory in Belgorod and Kursk regions.

According to the report, Peskov said that all attacks were successfully repelled and that the enemy lost 1,700 of the 2,500 saboteurs involved, 550 of them killed.

He also said that in the early morning of the 16th, the enemy again tried several times to invade Belgorod and Kursk regions. The Ukrainian saboteurs ultimately failed and did not even get close to the Russian border. Peskov said that the enemy lost nearly 70% of its personnel.

The report also said that on the 16th, Russian troops thwarted Ukrainian saboteurs' attempts to cross the border from the direction of Popovka, a settlement in Sumy Oblast, and from Spodaryushino and Kozinka in Belgorod Oblast. The Ukrainian army lost about 30 soldiers, 3 tanks, 2 armored fighting vehicles, a Czech-made Vampire multiple rocket launcher and a Grad multiple rocket launcher to air strikes and shelling.